Kids’ digital lives are full of positive experiences, but also very real dangers, some of which are emerging so fast that society is racing to understand them.

But it’s critical that we do. By understanding the risks kids face online, we can develop strategies to protect them against the harms introduced by rapidly advancing technologies.

Since 2019, Thorn’s methodical research initiatives have helped us understand and track youth behaviors, experiences, and risks online. A pillar of those initiatives, our annual Youth Monitoring Report now features five years of consistent data collection, giving us a strong big-picture view of how kids have been navigating the digital landscape over time.

Working directly with youth, our research team investigates young people’s attitudes toward and experiences with online grooming, sharing nudes, nonconsensual reshares, and whether they disclose their online sexual experiences. With this data, we can build an understanding of the State of the Situation to inform our efforts and those of our partners in the child safety ecosystem.

This year’s report, Youth Perspectives on Online Safety, 2023, shows that some areas of concern persist at similar rates, while others, such as the emerging threat of youth creating deepfake nudes of peers, are growing as quickly as the technologies that enable them.

What this year’s data reveals about youth online safety:

As we look at 2023’s findings in relation to previous years, we see that:

Many youth online attitudes and behaviors remain constant, such as navigating sexual interactions with adults and self-generated child sexual abuse material (SG-CSAM).

At the same time, the powerful capabilities and efficiencies of AI are leading to alarming new behaviors and risks.

Young people are using generative AI to create deepfake nudes of peers

Over the last five years, we’ve monitored the rate at which minors shared explicit imagery of their peers without consent. In 2023, 7% of minors reported they’d reshared someone else’s sexual images, yet nearly 20% reported they had seen nonconsensually reshared intimate images of others.

Importantly, the methods youth use to produce and share explicit imagery are evolving. With generative AI tools at their fingertips, young people are creating deepfake nudes of their peers. While the majority of minors don’t believe their classmates are participating in this behavior, roughly 1 in 10 minors reported they knew of cases where their peers had done so.

The speed and efficiency of AI technologies enable mass production of these fake nude images and provide a new weapon for bullying.

Young people are continuing to have risky encounters online

In 2023, more than half (59%) of minors reported they’ve had a potentially harmful online experience, and more than 1 in 3 minors reported they’ve had an online sexual interaction. One in 5 preteens (9- to 12-year-olds) reported having an online sexual interaction with someone they believed to be an adult.

Consistent with previous years, 1 in 4 minors agree it’s normal for people their age to share nudes with each other, and 1 in 7 minors admit to having shared their own explicit imagery (SG-CSAM).

Among minors who’ve shared their own SG-CSAM, 1 in 3 reported having done so with an adult.

Kids view platforms as key in helping them avoid and defend against threats

Youth continue to rely on in-platform safety tools. Minors who had an online sexual interaction were nearly twice as likely to use online reporting tools rather than seek offline support, such as from a caregiver or a friend.

Yet 1 in 6 minors who experienced an online sexual interaction didn’t disclose their experience to anyone. This data point remains steady and underscores the need for better youth-friendly safety tools as well as offline education that encourages judgment-free conversations between caregivers and youth.

Minors are navigating financial threats in some online sexual interactions

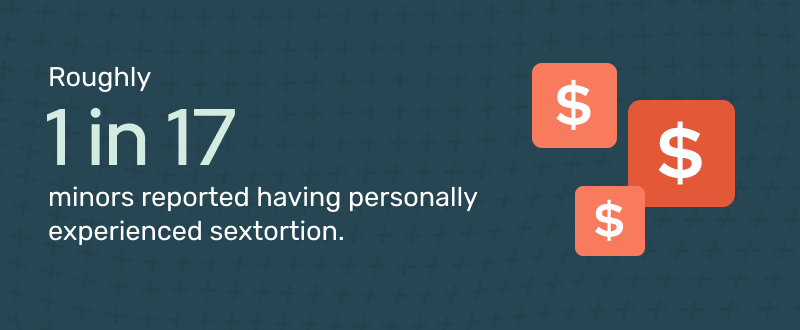

In 2023, a new question was added to the survey to assess the scale of sextortion among minors. Sextortion is the threat to leak explicit imagery depicting the victim if they do not comply with demands. In an adjacent intensive research effort, Thorn focused specifically on this emerging trend, uncovering how financial sextortionists are targeting primarily teenage boys.

Our youth surveys confirmed these occurrences: 1 in 17 minors reported having personally experienced sextortion.

Education remains critical to keeping kids safe

The insights from this year’s report illuminate the vulnerabilities of youth online and stress the urgency of evolving education, awareness, and prevention programs to match the changing landscape.

As kids continue to explore sexual experiences through the technology at their fingertips, sharing nudes will likely continue to spark natural curiosity. It’s imperative that we know how new tools, like generative AI, influence young people’s actions. At Thorn, we’re dedicated to ongoing research that keeps our team, and those empowered to protect children, informed of these current and emerging risks.

Talk to kids about preventing deepfake nudes

Fake images of all kinds have proliferated with deepfake-generating AI tools, and society is scrambling to respond. Thorn’s new discussion guide on deepfake nudes helps parents understand the issue and offers guidance for talking to kids about the dangers of resharing nudes, along with the importance of online and app safety.

Check out Navigating Deepfake Nudes: A Guide to Talking to Your Child About Digital Safety for resources and conversation starters.

Thorn is on a mission to defend children from sexual abuse, and our research plays a key role in that quest. Your support is critical to ensuring this work continues. Please join us and help us build a safer digital world for our kids.